Bayesian trajectory replay

Programming robots to execute a complex task can easily lead to convoluted state machines that are difficult to design, tune, and maintain. Learning by demonstration aims to solve this problem by leveraging demonstration data. We propose a non-parametric Bayesian model for learning robot behaviors from demonstration.

Our aim is to tackle complex tasks composed of multiple steps. We experiment with different tasks on several robots equipped with various sensors.

Reference: conference article.

3D navigation for ground robots

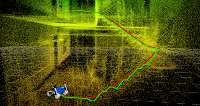

All robots need to avoid obstacles, but for robots able to move over rough terrain, it becomes difficult to distinguish obstacles from the terrain features that provide necessary support. Instead of separating the perception, path-planning, and path-execution stages as classical navigation approaches do, we propose to integrate them tightly.

Our algorithm takes a 3D point cloud as input and, given the current position of the robot and the goal, uses the D* Lite search algorithm to replan online on a lazy tensor representation. We also choose the best flipper configuration based on the local structure and the direction of motion, in order to increase stability and help the robot cross obstacles.
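As a rough illustration of the online replanning step, here is a minimal textbook D* Lite sketch in Python. It assumes a 4-connected 2D occupancy grid with unit step costs in place of the lazy tensor representation built from the point cloud, and it does not model flipper selection; the class `DStarLite`, the helper `follow`, and the `surprises` map of newly sensed obstacles are hypothetical names chosen for illustration.

```python
import heapq
import math

INF = math.inf


class DStarLite:
    """Textbook D* Lite on a 4-connected grid; grid[r][c] == 1 marks a blocked cell."""

    def __init__(self, grid, start, goal):
        self.grid = [list(row) for row in grid]
        self.rows, self.cols = len(grid), len(grid[0])
        self.start, self.goal, self.last = start, goal, start
        self.km = 0                      # key offset accumulated as the robot moves
        self.g, self.rhs = {}, {goal: 0}
        self.heap, self.open = [], {}    # priority queue with lazy deletion
        self._push(goal)

    def _h(self, a, b):                  # Manhattan distance, consistent on this grid
        return abs(a[0] - b[0]) + abs(a[1] - b[1])

    def _neighbors(self, s):
        for dr, dc in ((1, 0), (-1, 0), (0, 1), (0, -1)):
            r, c = s[0] + dr, s[1] + dc
            if 0 <= r < self.rows and 0 <= c < self.cols:
                yield (r, c)

    def _cost(self, a, b):               # entering or leaving a blocked cell is impossible
        return INF if self.grid[a[0]][a[1]] or self.grid[b[0]][b[1]] else 1

    def _key(self, s):
        m = min(self.g.get(s, INF), self.rhs.get(s, INF))
        return (m + self._h(self.start, s) + self.km, m)

    def _push(self, s):
        self.open[s] = self._key(s)
        heapq.heappush(self.heap, (self.open[s], s))

    def _top(self):                      # drop heap entries whose key is stale
        while self.heap and self.open.get(self.heap[0][1]) != self.heap[0][0]:
            heapq.heappop(self.heap)
        return self.heap[0] if self.heap else (None, None)

    def _update_vertex(self, u):
        if u != self.goal:
            self.rhs[u] = min((self._cost(u, s) + self.g.get(s, INF)
                               for s in self._neighbors(u)), default=INF)
        self.open.pop(u, None)
        if self.g.get(u, INF) != self.rhs[u]:
            self._push(u)

    def compute_shortest_path(self):
        while True:
            k_old, u = self._top()
            s = self.start
            if u is None or (k_old >= self._key(s)
                             and self.rhs.get(s, INF) == self.g.get(s, INF)):
                return
            heapq.heappop(self.heap)
            del self.open[u]
            if k_old < self._key(u):                  # key is outdated: requeue
                self._push(u)
            elif self.g.get(u, INF) > self.rhs[u]:    # overconsistent: lower g
                self.g[u] = self.rhs[u]
                for p in self._neighbors(u):
                    self._update_vertex(p)
            else:                                     # underconsistent: reset g
                self.g[u] = INF
                for p in [*self._neighbors(u), u]:
                    self._update_vertex(p)

    def next_step(self):
        if self.g.get(self.start, INF) == INF:
            raise RuntimeError("goal unreachable")
        # Greedy move along the current cost-to-goal estimates g.
        return min(self._neighbors(self.start),
                   key=lambda s: self._cost(self.start, s) + self.g.get(s, INF))

    def set_blocked(self, cell, blocked=True):
        """Register a newly sensed map change, then repair the plan incrementally."""
        self.km += self._h(self.last, self.start)
        self.last = self.start
        self.grid[cell[0]][cell[1]] = int(blocked)
        for s in [*self._neighbors(cell), cell]:  # all edges touching `cell` changed
            self._update_vertex(s)
        self.compute_shortest_path()


def follow(planner, surprises):
    """Move to the goal; surprises[pos] is a cell found blocked on reaching pos."""
    planner.compute_shortest_path()
    path = [planner.start]
    while planner.start != planner.goal:
        planner.start = planner.next_step()
        path.append(planner.start)
        if planner.start in surprises:
            planner.set_blocked(surprises[planner.start])
    return path
```

On a free 3 × 5 grid, `follow(DStarLite(grid, (1, 0), (1, 4)), {(1, 1): (1, 2)})` should return a seven-cell path (six moves) that detours around (1, 2). When the obstacle appears, the search reuses its previous cost-to-goal estimates and only repairs the affected part of the plan instead of replanning from scratch, which is what makes D* Lite suited to online replanning.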

References: conference paper, software.

Past research projects

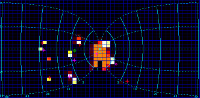

Bayesian model of eye-movement selection

Multiple Object Tracking is a common psychophysics task in which subjects are asked to keep track of a set of targets moving among a set of distractors. The classical experiment requires the gaze to stay fixed at the center of the screen but, by lifting this constraint, one can study eye-movement selection. We have proposed a Bayesian model of eye movements in this task, inspired by the Bayesian Occupancy Filter. This model follows the log-complex retinotopic mapping observed in the superior colliculus. With this model, we were able to show that uncertainty plays an important role in the selection of ocular saccades.
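To make the log-complex mapping concrete, here is a sketch of the standard complex-logarithm model of the collicular map, w = ln((z + A) / A), where z = R·exp(iφ) encodes the retinal eccentricity R and direction φ of a target. The function names and the parameter values (A in degrees, Bu and Bv in millimetres) are illustrative assumptions, not necessarily those used in our model, and the Bayesian Occupancy Filter part of the model is not shown.

```python
import cmath


def retina_to_colliculus(R, phi, A=3.0, Bu=1.4, Bv=1.8):
    """Map a retinal location (eccentricity R in degrees, direction phi in
    radians) to collicular coordinates (u, v) via w = ln((z + A) / A).
    The default parameter values are illustrative assumptions."""
    w = cmath.log((cmath.rect(R, phi) + A) / A)
    return Bu * w.real, Bv * w.imag


def colliculus_to_retina(u, v, A=3.0, Bu=1.4, Bv=1.8):
    """Inverse mapping: recover (R, phi) from collicular coordinates (u, v)."""
    z = A * (cmath.exp(complex(u / Bu, v / Bv)) - 1)
    return abs(z), cmath.phase(z)
```

Near the fovea (R much smaller than A) the mapping is roughly linear, while at large eccentricities u grows like the logarithm of R, so peripheral locations receive a compressed representation on the collicular map.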

References: journal article, conference article, software.

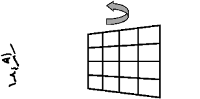

Human perception of shape from motion

For my PhD, I studied the perception of planes from optic flow. Structure from motion is an ill-posed inverse problem, as many combinations of object shape and relative motion can lead to the same stimulus. Assuming that the object is rigid is a common constraint that can help solve this problem. However, when the observer is moving, this assumption cannot explain the bias shown in psychophysical studies. A competing assumption is that the object is stationary. I proposed using Bayesian programming to fuse the rigidity and stationarity hypotheses in a single model. This model successfully accounts for a wide variety of experimental findings, including the effects of shear and of the field of view.
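The idea of fusing two hypotheses can be illustrated, under strong simplifying assumptions, with the generic rule for combining two Gaussian estimates: if each hypothesis alone yielded a Gaussian estimate of the plane's slant, their normalised product would also be Gaussian, with precisions that add. This is a textbook cue-combination sketch rather than the thesis model, which is formulated as a Bayesian program; the function name `fuse_gaussians` and the slant values are hypothetical.

```python
import math


def fuse_gaussians(mu_a, var_a, mu_b, var_b):
    """Normalised product of N(mu_a, var_a) and N(mu_b, var_b).

    Precisions (inverse variances) add, and the fused mean is the
    precision-weighted average of the two means.
    """
    precision = 1.0 / var_a + 1.0 / var_b
    var = 1.0 / precision
    mu = var * (mu_a / var_a + mu_b / var_b)
    return mu, var


# Hypothetical slant estimates (degrees) and variances under each hypothesis.
slant_rigid = (30.0, 25.0)
slant_stationary = (45.0, 100.0)
mu, var = fuse_gaussians(*slant_rigid, *slant_stationary)
print(f"fused slant: {mu:.1f} deg, sd {math.sqrt(var):.1f} deg")
```

With these numbers, the fused slant is about 33 degrees, closer to the more reliable rigidity-based estimate, and its variance (20) is smaller than either input variance.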

References: PhD thesis (in French), journal article, book chapter.